"The ________ Generation” has long been one of those red-flag phrases, a strong indicator that you may be about to encounter serious bullshit. There are occasions when it makes sense to group together people born during a specified period of 10 to 20 years, but those occasions are fairly rare and make up a vanishingly small part of the usage of the concept.

First, there is the practice of making a sweeping statement about a "generation" when one is actually making a claim about a trend. This isn't just wrong; it is the opposite of right. The very concept of a generation implies a relatively stable state of affairs for a given group of people over an extended period of time. If people born in 1991 are more likely to do something than people born in 1992, and people born in 1992 are more likely to do it than people born in 1993, and so on, discussing the behavior in terms of a generation makes no sense whatsoever.

We see this constantly in articles about "the millennial generation" (and while we are on the subject, when you see "the millennial generation," you can replace "may be about to encounter serious bullshit" with "are almost certainly about to encounter serious bullshit"). Often these "What's wrong with millennials?" think pieces manage multiple layers of crap, taking a trend that is not actually a trend and then mislabeling it as a trait of a generation that's not a generation.

How often does the very concept of a generation make sense? Think about what we're saying when we use the term. In order for it to be meaningful, people born in a given 10 to 20 year interval have to have more in common with each other than with people in the preceding and following generations, even in cases where the inter-generational age difference is less than the intra-generational age difference.
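The condition above can be made concrete with a small sketch. Everything in it is a hypothetical illustration, not data: the 1980 cutoff, the two toy behavior curves (`trend` and `step`), and the birth years being compared are assumptions chosen only to show how the comparison works.

```python
# Toy illustration: when does a "generation" label carry information?
# All numbers here are hypothetical; none come from real data.

CUTOFF = 1980  # assumed boundary between two labeled "generations"


def trend(birth_year: int) -> float:
    """Behavior that drifts steadily with birth year (a trend)."""
    return 0.02 * (birth_year - 1960)


def step(birth_year: int) -> float:
    """Behavior that is stable within a cohort and jumps at the cutoff."""
    return 0.2 if birth_year < CUTOFF else 0.8


def label_is_meaningful(behavior) -> bool:
    """Check the condition from the text for one pair of comparisons.

    Two people in the same generation born 15 years apart should differ
    less than two people in adjacent generations born 2 years apart.
    """
    within = abs(behavior(1979) - behavior(1964))  # same label, 15-year gap
    across = abs(behavior(1981) - behavior(1979))  # different labels, 2-year gap
    return within < across


trend_ok = label_is_meaningful(trend)
step_ok = label_is_meaningful(step)
print("steady trend:", trend_ok)
print("abrupt shift:", step_ok)
```

Under the steady trend the 15-year gap inside one label is larger than the 2-year gap across the boundary, so the label tells you nothing that birth year does not. Only the abrupt shift passes the check.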

Consider the conditions where that would be a reasonable assumption. You would generally need society to be at one extreme for an extended period of time, then suddenly swing to another. You can certainly find big events that produce this kind of change. In Europe, for instance, the First World War marked a clear dividing line for the generations.

(It is important to note that the term "clear" is somewhat relative here. There is always going to be a certain fuzziness with cutoff points when talking about generations, even with the most abrupt shifts. Societies don't change overnight and individuals seldom fall neatly into the groups. Nonetheless, there are cases where the idea of a dividing line is at least a useful fiction.)

In terms of living Americans, what periods can we meaningfully associate with distinct generations? I'd argue that there are only two: those who spent a significant portion of their formative years during the Depression and WWII; and those who came of age in the Post-War/Youth Movement/Vietnam era.

Obviously, there are all sorts of caveats that should be made here, but the idea that Americans born in the mid-20s and mid-30s would share some common framework is a justifiable assumption, as is the idea that those born in the mid-40s and mid-50s would as well. Perhaps more importantly, it is also reasonable to talk about the sharp differences between people born in the mid-30s and the mid-40s.

There are a lot of interesting insights you can derive from looking at these two generations, but, as far as I can see, attempts to arbitrarily group Americans born after, say, 1958 (which would have them turning 18 after the fall of Saigon) are largely a waste of time and are often profoundly misleading. The world continues to change rapidly, just not in a way that lends itself to simple labels and categories.

Comments, observations and thoughts from two bloggers on applied statistics, higher education and epidemiology. Joseph is an associate professor. Mark is a professional statistician and former math teacher.

Thursday, December 22, 2016

Wednesday, December 21, 2016

Tuesday, December 20, 2016

Two Napoleons and a Potemkin village

I wish I remembered the exact context, but a few years ago I was listening to a radio interview on the subject of delusion. At one point, the reporter asked "what happens when two mental patients who both think they are Napoleon meet each other?" The expert replied, "After careful consideration, both patients come to the correct conclusion: the other guy is crazy."

That anecdote came to mind recently when reading this piece in New York magazine by Benjamin Wallace profiling the troubles at Hyperloop One. [Longtime readers will remember this is not the first time we've called out New York's Hyperloop coverage.]

There is, of course, another "hyperloop" company in the news, Hyperloop Transportation Technologies, but as Shervin Pishevar (venture capitalist and co-founder of Hyperloop One) told the generally credulous reporter, the other company didn't really have a serious chance of building anything of consequence.

[a] crowdsourced, volunteer-staffed company with a confusingly similar name, Hyperloop Transportation Technologies. It was perhaps not a serious long-term threat — the company was run by a former Uber driver and a former Italian MTV VJ — but Hyperloop Transportation Technologies had a few months’ head start over Hyperloop Technologies, and the amateurish nature of his rivals didn’t help Pishevar in the credibility game, which he recognized was, at this point, the entire game.

The dismissive tone might have had a bit more resonance if it hadn't been followed almost immediately by this description of how Hyperloop One prepared for its big moment in the sun:

[Emphasis added]

Pishevar knew the power of a well-placed media exclusive to lubricate the creation of something from nothing. In fact, he had been keeping Forbes technology editor Bruce Upbin up to date on every development of his new venture since its infancy. “Shervin mentioned the Forbes piece early, maybe even the first day I met him,” BamBrogan remembers. By early 2015, Pishevar’s company was a few steps further along, having hired a general counsel (Pishevar’s brother Afshin, who was bunking in BamBrogan’s spare bedroom) and raised $7.5 million, primarily from Pishevar’s Sherpa Capital and from Formation 8, a VC firm run by the investor Joe Lonsdale. But the company was still in BamBrogan’s garage, with no health insurance, no company insurance, no HR processes, no website, and no office space. The only thing holding it together, at this point, was Pishevar’s estimable sales skills. With a big Forbes story now slated for imminent publication, the company was in a race to acquire enough of a patina of substantiality to merit prominent coverage in America’s most famous business magazine. “It was crazy,” BamBrogan recalls. “We’re spending time finding the right industrial space that we want to grow into but also that we can do for this Forbes shoot.”

A recently hired director of operations knew the landlord of a large campus in downtown L.A., and at the end of the month, BamBrogan and his handful of colleagues moved into a sliver of the space, a 6,500-square-foot former ice factory, before they had secured a lease. With the magazine deadline looming, the skeleton crew were unrolling carpets, BamBrogan was making repeated trips to Ikea in his Audi sedan to buy 16 Vika Amon tables and 64 Vika Adil legs, and the company was buying 25 computers and 50 monitors. Some of the computers had only one graphics card and couldn’t actually run two monitors, but the superfluous equipment beefed up the apparent size of the company. The day of the shoot, BamBrogan and his co-workers scheduled a flurry of job interviews in the office so that more people would be around.

As if this weren't bad enough, the article then goes on to quote engineers for the company admitting what many of us have been saying all along: that the incredibly over-hyped demonstration was entirely limited to the parts of the technology everyone already knew worked. Rather than being a test, it was, in reality, little more than a glorified science fair exhibit.

In case I was a bit obscure in the title...

In politics and economics, a Potemkin village (also Potyomkin village, derived from the Russian: Потёмкинские деревни, Russian pronunciation: [pɐˈtʲɵmkʲɪnskʲɪɪ dʲɪˈrʲɛvnʲɪ] Potyomkinskiye derevni) is any construction (literal or figurative) built solely to deceive others into thinking that a situation is better than it really is. The term comes from stories of a fake portable village, built only to impress Empress Catherine II during her journey to Crimea in 1787. While some modern historians claim accounts of this portable village are exaggerated, the original story was that Grigory Potemkin erected the fake portable settlement along the banks of the Dnieper River in order to fool the Russian Empress.

Monday, December 19, 2016

Charter schools and astroturf

There's nothing particularly exceptional about the following story – if anything, it's pretty much par for the course – but it is a reminder of something that we all know on some level but often fail to acknowledge. There is a huge imbalance in the per-school money available for charters versus traditional public schools. This would always be a concern but under the current system it is nothing short of a fatal flaw. Today, schools with large lobbying budgets for getting funding approved and large advertising budgets for attracting students (particularly those who are likely to improve the schools' test scores) are at such an advantage that they can easily push other schools into a death spiral of budget cuts and dwindling enrollment.

If we want to have charters as a part of a functioning education system, we need to reform that system in a way that minimizes the impact of deep pockets rather than amplifies it.

The cost to bus charter school students and advocates to rallies: $87,870.

The cost of providing them food from Subway: $14,040.

The cost of launching a media blitz including a new wave of television advertisements after state legislators failed to recommend funding new charter schools: $300,429.

The impact on students “trapped in failing schools” if this campaign drives funding to greatly expand charter school enrollment: Priceless, says Families for Excellent Schools, the nonprofit organization behind the effort.

According to spending reports filed with the Office of State Ethics Monday, the organization spent $413,000 in April — more than double what the organization spent during the first three months of the legislative session. This brings the organization’s spending to $667,000 so far this year. Add in what other groups advocating for charter schools are spending, and the total nears $1 million.

Saturday, December 17, 2016

The future of Faraday Future is questionable

Exceptional work by Raphael Orlove of Jalopnik (the spirit of Gawker lives on). The whole article is highly recommended.

Sources close to Faraday Future, including suppliers, contractors, current, prospective and ex-employees all spoke to Jalopnik over a number of weeks on conditions of anonymity and said the money has been M.I.A., the plans are absurd and the organization verges on the dysfunctional.

A year ago, things seemed very different. In late November of 2015, Faraday Future burst onto the scene with promises as big as its name was mysterious.

Staffed by prominent industry figures poached from companies like Tesla, Apple, Ferrari and BMW, FF made bold, unprecedented promises: an electric car that could not only drive itself but connect to its owner’s smartphone and learn from their daily habits to become the ultimate personalized vehicle. And if ownership didn’t suit their lifestyle, fine; the company was eager to expand into ride-sharing and autonomous fleet services.

With a $1 billion facility in Nevada, the company promised production by 2017. Forget what you know about cars, the teaser videos proclaimed. A revolution is coming and we would see it at the CES trade show in Las Vegas. Everyone anticipated an actual car that could live up to these claims.

Then January and CES rolled around and the company revealed that yes, that wild rocket-looking supercar that leaked onto the internet via an app really was Faraday Future’s show car. But not its actual production car. That would come later, the company swore after an embarrassing debut that laid the hype and the buzzwords on thick but had seemingly little to back it up. In the meantime the company promised a “skateboard” modular electric platform that could be adapted to suit several different body styles.

But everything would be fine, right? After all, FF was getting $335 million in state tax incentives and abatements from Nevada for its plant, and it was sponsoring a Formula E team. And in the company’s own words, it would do for cars what the iPhone did for communications in 2007. And Faraday Future is funded by Jia Yueting, a tech mogul in China known for starting the country’s first paid video streaming service. It’s often nicknamed “The Netflix of China,” and it brought Jia the billions he needed to start a whole tech empire, selling everything from smartphones to TVs to cars.

What could go wrong?

That was in January. FF spent the next several months in the news over and over again, almost always for reasons no company wants to be in the news. There was the lag on payments to the factory’s construction company, the senior staffers jumping ship, the confusing debut of a seemingly competing car from the company helmed by its principal backer, the lawsuits from a supplier and a landlord who said they weren’t getting paid, the work stoppage on the factory, the state officials in Nevada who said Jia didn’t have as much money as he claimed (something that Jia denied in a haters-are-my-motivators statement), and the fact that leaders in that state copped to never really knowing much about FF’s financials before approving that incentive package.

...

I wish I could say this in front of every sentence I write about Faraday Future, but from everything I’ve seen there is good and serious engineering work getting done at the company.

If anything, Faraday Future has too many people working on one of the most interesting cars we’ve seen in years, engineers crammed computer to computer, even on fold up-picnic tables as one anonymous interviewee told Jalopnik. All-electric, eyes on autonomy, with incredible performance and design. “There’s a lot of good people there,” one source noted. “That’s the worst part.”

But you can’t have this engineering side without a solid business to back it up, and the good work at Faraday Future seems like it has been constantly undone by the unrealistic demands of its top leadership and a money gulf across the Pacific.

Friday, December 16, 2016

Despite what Munroe says about the industry group, it's still less effective at lobbying than Disney's

Remember this?

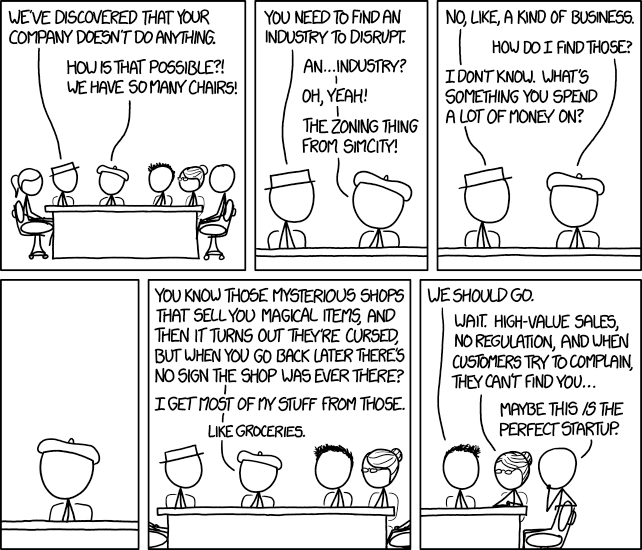

The thinking of business writers has become so muddled and, in places, so overtly mystical that the important fundamental drivers are completely lost in the discussion. Words like "disruptor" or "transformative" have such tremendous emotional resonance for the writers (and investors) that they blind them to the underlying business forces.

I've been meaning to work up a thread on magical thinking in business culture and journalism. Leave it to XKCD to get there first.

Thursday, December 15, 2016

"Disruption" is now officially a dead metaphor

DYING METAPHORS. A newly invented metaphor assists thought by evoking a visual image, while on the other hand a metaphor which is technically ‘dead’ (e. g. iron resolution) has in effect reverted to being an ordinary word and can generally be used without loss of vividness. But in between these two classes there is a huge dump of worn-out metaphors which have lost all evocative power and are merely used because they save people the trouble of inventing phrases for themselves. Examples are: Ring the changes on, take up the cudgel for, toe the line, ride roughshod over, stand shoulder to shoulder with, play into the hands of, no axe to grind, grist to the mill, fishing in troubled waters, on the order of the day, Achilles’ heel, swan song, hotbed. Many of these are used without knowledge of their meaning (what is a ‘rift’, for instance?), and incompatible metaphors are frequently mixed, a sure sign that the writer is not interested in what he is saying. Some metaphors now current have been twisted out of their original meaning without those who use them even being aware of the fact. For example, toe the line is sometimes written as tow the line. Another example is the hammer and the anvil, now always used with the implication that the anvil gets the worst of it. In real life it is always the anvil that breaks the hammer, never the other way about: a writer who stopped to think what he was saying would avoid perverting the original phrase.

George Orwell

We've been heading toward this for a long time. From the beginning, the idea of disruption never explained nearly as much as it was supposed to. There were always as many exceptions as cases and much of the appeal of the idea could be attributed to the way it played into popular narratives about visionary innovators.

By now, the term has been so overused that it has lost all meaning. Here's Jill Lepore writing for the New Yorker.

Ever since “The Innovator’s Dilemma,” everyone is either disrupting or being disrupted. There are disruption consultants, disruption conferences, and disruption seminars. This fall, the University of Southern California is opening a new program: “The degree is in disruption,” the university announced. “Disrupt or be disrupted,” the venture capitalist Josh Linkner warns in a new book, “The Road to Reinvention,” in which he argues that “fickle consumer trends, friction-free markets, and political unrest,” along with “dizzying speed, exponential complexity, and mind-numbing technology advances,” mean that the time has come to panic as you’ve never panicked before. Larry Downes and Paul Nunes, who blog for Forbes, insist that we have entered a new and even scarier stage: “big bang disruption.” “This isn’t disruptive innovation,” they warn. “It’s devastating innovation.”

Obviously, sense has been draining away for a long time, but the term officially flat-lined when the heads of AT&T and Time Warner headed to DC to defend the indefensible. From the must-read Gizmodo piece by Michael Nunez.

In front of the Senate subcommittee today, AT&T CEO Randall Stephenson brazenly dismissed concerns of potentially anticompetitive behavior. The bespectacled executive, according to a New York Times report, told lawmakers that the merger would “disrupt the entrenched pay-TV models” and give customers more options.

The truth is a little more complicated than that. AT&T is already the second-largest US telecommunications company (with 133.3 million subscribers) and the largest pay-TV service in the US and the world. If it merged with Time Warner, the second-largest broadband provider and third-largest video provider in the US, it would create a media conglomerate with unspeakable power. Critics say it would be a conglomerate that many companies just couldn’t compete with.

We are truly into Newspeak territory here. The sole purpose of this type of merger is to entrench position and prevent the industry from being disrupted. Those at the top quite accurately view disruption as a serious and possibly existential threat to the status quo. If you can now use the term to describe a mega-merger, it has no meaning left at all.

Wednesday, December 14, 2016

Vanishing overhead bins in the upsell economy

From Matt Novak

United has announced a “new tier” of ticket, as the company calls it. The airline’s cheapest flights will now be called Basic Economy, and if you want to store something in the overhead bin, that’ll cost you extra. Passengers will be able to bring a carry-on that fits underneath the seat in front of them. But don’t even think about putting something above you. That’s for people who paid more.

Of course, the airline is positioning this move as providing “more options” for customers. But it seems like providing more “choice” always comes with a fee for something customers used to get for free.

“Customers have told us that they want more choice and Basic Economy delivers just that,” Julia Haywood, executive vice president at United said in a hilarious news release. “By offering low fares while also offering the experience of traveling on our outstanding network, with a variety of onboard amenities and great customer service, we are giving our customers an additional travel option from what United offers today.”

Want to hear another fun aspect of “choice” that Basic Economy provides? Passengers won’t get an assigned seat until the day they depart.

I don't have access to the actual numbers, of course, but as a former marketing guy, I strongly suspect that the real money here is not coming from that 20 bucks or so you pay to put a carry-on bag in the overhead compartment. Instead, it comes from the way that policies like this screw with consumers' decision-making ability.

This works on at least three levels:

1. Fees make pricing more opaque. Sometimes, additional costs may be completely unexpected – you go to pay your bill and find it's much larger than what you thought you had agreed to – but even when you know something is coming, those fees make it difficult to know exactly how much you will be handing over.

2. These policies greatly complicate the calculations consumers need to perform. Despite what you might occasionally hear from some freshwater economist, the human brain has finite computing power. If the computations required to determine the optimal purchase get too long and involved, people are more likely to resort to shortcuts or simply start making mistakes.

3. A crappy product is the first step on the road to upgrade riches. This is not all that different from the classic bait-and-switch scam we are all familiar with, but the potential payoff is much greater. Tiered systems offer enormous potential for convincing people to pay way too much money for things they don't particularly want. By making that bottom-level product sufficiently unattractive, you can get a lot of customers into the upgrade habit. Just ask your local cable company.

Tuesday, December 13, 2016

Thomas Friedman blogging IV – – good tech is friendly tech

Though it is a bit of a side issue, there is another flaw in Thomas Friedman's argument that I'd like to address, as much for future reference as anything else (I plan on getting back to some of these larger questions).

As has been noted previously by others, Friedman's technology is a big, generic, undefined thing. A mysterious godlike force which must be accommodated lest ye be afflicted with Luddite cooties. Commentators like Friedman seldom think of technology as a set of tools, but that's exactly the appropriate framework.

The idea that human adaptability needs to be proportional to technological change is wrong in multiple ways. Advances in technology should produce tools that are more powerful, cheaper, and generally easier to use. All other things being equal, better tech should require less adaptability than less sophisticated tech. Whether we are talking about automatic transmissions or USB ports or natural language processing, the objective is to make things easier.

It's important to step back for a moment at this point and distinguish between the adapting that an individual has to do and what a society has to do collectively. Even the most tech savvy among us struggle now and then with a new development, but if we really are talking about an advance, the learning curve on the new technology should be better than the learning curve on the old.

More excellent work from Adam Conover

Yet another example of a comedian producing better journalism than most journalists.

Monday, December 12, 2016

Thomas Friedman blogging III – – a few words from Matt Novak

I don't buy all of Novak's take on this (more on that later if I get around to it), but, on the whole, this is an exceptionally sharp analysis:

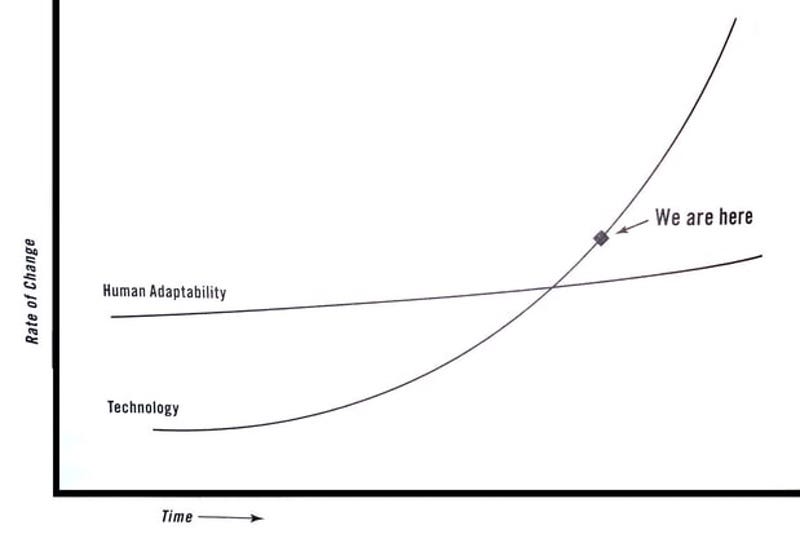

Rolling Stone’s Matt Taibbi first flagged the graph in a blog post today. The graph shows technology (which is never defined) and its rate of change (which is never defined) and human adaptability (which is never defined). It’s the kind of thing you might see scrawled in feces in Ted Kaczynski’s prison cell but it’s now been conveniently committed to paper and given a much wider audience.

...

The truth is that technological adoption isn’t necessarily speeding up. Just look at consumer goods like television. In 1950 just 8 percent of Americans had a TV. Four years later, in 1954, a whopping 59 percent of American households had a TV. Here we are on the cusp on 2017 and I’m having a hard time thinking of any consumer technology that made any comparable jump since 2012.

Or let’s go back further. The Great Depression was a desperate time for most Americans. But technological leaps didn’t stop. Look at the mechanical refrigerator as another example of rapid change in a relatively short period of time. Just 8 percent of American households had a fridge in 1930. By the end of the decade roughly 44 percent had one. People much smarter than myself have argued that refrigeration did more to shape the United States than most other technologies of the 20th century. Yes, smartphones are revolutionary. But refrigeration tech arguably changed America as much, if not more.

The adoption rates of early and mid-20th Century consumer technology are even more impressive when you consider infrastructure. I'd argue that the percentage of American households with mechanical refrigerators in 1939 is, in many ways, less relevant than the percentage of electrified households with refrigerators that year. By the same token, a large part of the country didn't get TV stations until the mid-50s and yet we still hit 59%. Viewed this way, consumers were considerably more eager to adopt new technology in the mid-20th Century than they are today.
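For a rough sense of scale, the figures quoted above can be turned into average gains in percentage points per year. This is only a back-of-the-envelope sketch: the helper name `points_per_year` is made up for illustration, and the refrigerator span is taken as 1930 to 1939 on the assumption that "the end of the decade" means 1939.

```python
def points_per_year(start_share: float, end_share: float, years: int) -> float:
    """Average gain in household share, in percentage points per year."""
    return (end_share - start_share) / years


# Figures as quoted from Novak above (percent of American households).
tv = points_per_year(8, 59, 1954 - 1950)
# Assumption: "the end of the decade" is read as 1939.
fridge = points_per_year(8, 44, 1939 - 1930)

print(f"TV, 1950-1954: {tv:.2f} points per year")
print(f"Refrigerator, 1930-1939: {fridge:.2f} points per year")
```

Neither number accounts for infrastructure. Restricting the denominator to electrified households, or to households within reach of a TV station, would require data that is not given here.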

Friday, December 9, 2016

Cracked's interesting but self-refuting argument

"Why Pop Science Matters - Lowest Common Dominator"

I've got some thoughts on this, but to avoid any spoilers, we'll talk about it after the break.

Thursday, December 8, 2016

Essential tech reporting at Gizmodo [Facebook edition]

William Turton has a series that you really need to be following. The first and the third articles address Facebook's fake news problem. The second describes how the company managed to spin a failed test of a major initiative as an unqualified success.

In a related article, Michael Nunez describes how the company slow-walked its response to the fake news problem due to fear of conservative backlash.

Ordinal wealth and the bigger pie fallacy

Picking up on Joseph's recent post (which in turn picked up on Jared Bernstein's earlier post), specifically this part:

When the benefits of trade are broadly spread then everyone benefits. But capturing all of the benefits and then using that political power to seize additional benefits is a great way to get very powerful but it runs the risk of undermining the political calculation. After all, if trade is the way that the "rich get richer" and the "poor get even less" then it starts to look like a very bad deal.

Let's focus on the first sentence. This is a completely conventional assertion, and it's entirely valid if you make certain standard (and always implicit) assumptions about what it means to benefit from a transaction. Unfortunately, the reasoning does not stand up well when we start tweaking those assumptions.

A few years ago, we ran a post discussing different ways of viewing wealth. One of the approaches we covered was ordinal wealth, the idea that, in some situations, total wealth might be less important (or a less useful metric) than wealth-rank. In terms of social status, political power, and personal satisfaction, being the richest man in town with, say, $10 million in the bank might be preferable to having $15 million but not breaking the top five.

There is no obvious reason why absolute wealth should be a better central metric than ordinal wealth and other relative measures – you can almost certainly find cases where each is appropriate – but if we do allow for the possibility that relative measures might sometimes work better, all sorts of cherished economic truths start to look fairly shaky.

Maybe I'm missing something, but pretty much all the assurances we've heard about how enlightened self-interest will keep us on the right track seem to assume that rational actors will seek to optimize absolute wealth. If the rich and powerful are more concerned with maximizing relative position, it's difficult to see where that enlightenment would come in.

Wednesday, December 7, 2016

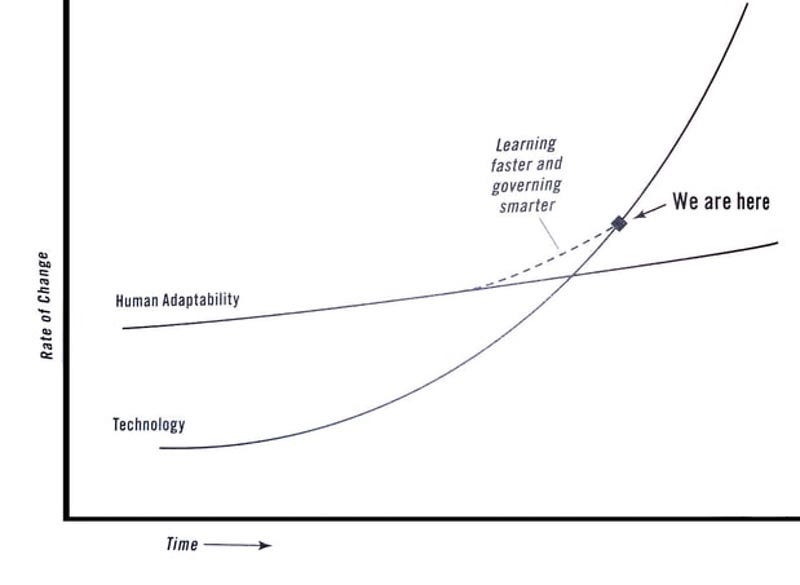

Thomas Friedman blogging II – failing to learn about "learning faster"

Following up on the previous post, here is a bit of background on the recent, widely-mocked graphs from Thomas Friedman. Though it was the first that prompted the most derision, the second graph might actually merit a bit more attention.

At this point, it is helpful to have a bit of background on Friedman's ideas about modern pedagogy. Friedman has enormous faith in the power of technology to revolutionize education. Unfortunately, he appears to have no idea how antiquated his view of educational technology is, or how badly his ideas have fared in the past. Here's what we had to say on the subject back in 2013.

75 years of progress

While pulling together some material for a MOOC thread, I came across these two passages that illustrated how old much of today's cutting edge educational thinking really is.

First, from a 1938 book on education*:

"Experts in given fields broadcast lessons for pupils within the many schoolrooms of the public school system, asking questions, suggesting readings, making assignments, and conducting tests. This mechanizes education and leaves the local teacher only the tasks of preparing for the broadcast and keeping order in the classroom."

And this recent entry from Thomas Friedman:

For relatively little money, the U.S. could rent space in an Egyptian village, install two dozen computers and high-speed satellite Internet access, hire a local teacher as a facilitator, and invite in any Egyptian who wanted to take online courses with the best professors in the world, subtitled in Arabic.

I know I've made this point before, but there are a lot of relevant precedents to the MOOCs, and we would have a more productive discussion (and be better protected against false starts and hucksters) if people like Friedman would take some time to study up on the history of the subject before writing their next column.

* If you have any interest in the MOOC debate, you really ought to read this Wikipedia article on Distance Learning.
